I am trying to plot the hyperplane for the model I trained with LinearSVC and sklearn. Note that I am working with natural language: before fitting the model I extracted features with CountVectorizer and TfidfTransformer. Here is the classifier: `from sklearn.svm import LinearSVC`, then `clf = LinearSVC(C=0.2).fit(X_train_tf, y_train)`.

Then I tried to plot as suggested on the Scikit-learn website. That example gets the separating hyperplane, plots the parallels to it that pass through the support vectors, and then plots the line, the points, and the nearest vectors to the plane: the data with `plt.scatter(X[:, 0], X[:, 1], c=Y, cmap=plt.cm.Paired)` and the support vectors with a second `plt.scatter` call on the columns of `clf.support_vectors_`. However, this example uses `svm.SVC(kernel='linear')`, while my classifier is LinearSVC.

A similar question: I'm currently using SVC to separate two classes of data (the features are named data and the labels are condition). I fit the data using GridSearchCV and obtain a classification score from it.

You cannot visualize the decision surface for a lot of features. This is because the dimensions will be too many and there is no way to visualize an N-dimensional surface. You can, however, take a look at some slices (select 3 features, or the main components of a PCA projection): simply train the SVM and plot it forcing 'pca' visualization. If you are not familiar with the underlying linear algebra, you can use the gmum.r package, which does this for you. Alternatively, you can use 2 features and plot nice decision surfaces.

For three features, take `X = iris.data[:, :3]` (we only take the first three features). The equation of the separating plane is given by all x so that `np.dot(svc.coef_[0], x) + b = 0`. Solving for the third coordinate gives `z = lambda x, y: (-clf.intercept_[0] - clf.coef_[0][0]*x - clf.coef_[0][1]*y) / clf.coef_[0][2]`. The plane and the points are then drawn on a 3D axis created with `ax = fig.add_subplot(111, projection='3d')`, using `ax.plot3D` for the points of each class.
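The three-feature case can be sketched roughly as below. This is a minimal sketch, not the original author's full code: restricting iris to its first two classes (so that there is a single separating plane), the helper name `plane_z`, the grid resolution, and the marker styles are all assumptions made for illustration.

```python
import numpy as np
import matplotlib.pyplot as plt
from sklearn import datasets
from sklearn.svm import SVC

iris = datasets.load_iris()
X = iris.data[:, :3]  # we only take the first three features
Y = iris.target

# Assumption: keep two classes so there is exactly one separating plane.
X = X[Y < 2]
Y = Y[Y < 2]

clf = SVC(kernel="linear").fit(X, Y)
w = clf.coef_[0]
b = clf.intercept_[0]


def plane_z(x, y):
    """Third coordinate of the plane w . (x, y, z) + b = 0 (hypothetical helper)."""
    return (-b - w[0] * x - w[1] * y) / w[2]


# Grid over the range of the first two features.
xs = np.linspace(X[:, 0].min(), X[:, 0].max(), 30)
ys = np.linspace(X[:, 1].min(), X[:, 1].max(), 30)
xx, yy = np.meshgrid(xs, ys)

fig = plt.figure()
ax = fig.add_subplot(111, projection="3d")
ax.plot3D(X[Y == 0, 0], X[Y == 0, 1], X[Y == 0, 2], "ob")
ax.plot3D(X[Y == 1, 0], X[Y == 1, 1], X[Y == 1, 2], "sr")
ax.plot_surface(xx, yy, plane_z(xx, yy), alpha=0.3)
ax.set_xlabel("feature 0")
ax.set_ylabel("feature 1")
ax.set_zlabel("feature 2")
```

Call `plt.show()` or `fig.savefig(...)` afterwards to view the result. The division by `w[2]` assumes the plane is not parallel to the third axis, which holds for this data but is not guaranteed in general.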

In the 2D case, the decision surface is drawn with `plot_contours(ax, clf, xx, yy, cmap=plt.cm.coolwarm, alpha=0.8)` and the samples are overlaid with `ax.scatter(X0, X1, c=y, cmap=plt.cm.coolwarm, s=20, edgecolors='k')`.

Case 2: 3D plot for 3 features, using the iris dataset and `from sklearn.svm import SVC`.

The Scikit-learn example first gets the separating hyperplane: `w = clf.coef_[0]`, `a = -w[0] / w[1]`, `xx = np.linspace(-5, 5)`, `yy = a * xx - (clf.intercept_[0]) / w[1]`. It then plots the parallels to the separating hyperplane that pass through the support vectors, starting from `b = clf.support_vectors_[0]` and a line `yy_down` with the same slope `a` through that point.

Figure: the optimal separating hyperplane, which maximizes the margin in the feature space. The circled samples are the support vectors.

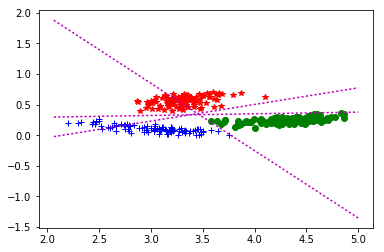
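For the LinearSVC case raised in the question, one possible workaround is sketched below. LinearSVC does not expose a `support_vectors_` attribute, so the margin lines are taken directly from `w . x + b = +1` and `w . x + b = -1`, and the points on or inside the margin are picked out with the decision function. The toy data from `make_blobs`, the variable names `yy_pos`, `yy_neg`, and `sv_idx`, and the `max_iter` value are assumptions for illustration; they stand in for real two-dimensional features.

```python
import numpy as np
import matplotlib.pyplot as plt
from sklearn.datasets import make_blobs
from sklearn.svm import LinearSVC

# Assumption: hypothetical 2D toy data in place of the real features.
X, y = make_blobs(n_samples=60, centers=2, random_state=0)

clf = LinearSVC(C=0.2, max_iter=10000).fit(X, y)
w = clf.coef_[0]
b = clf.intercept_[0]

# Separating line w[0]*x + w[1]*y + b = 0, solved for y.
a = -w[0] / w[1]
xx = np.linspace(X[:, 0].min(), X[:, 0].max(), 50)
yy = a * xx - b / w[1]

# Margin lines: w . x + b = +1 and w . x + b = -1.
yy_pos = a * xx - (b - 1) / w[1]
yy_neg = a * xx - (b + 1) / w[1]

# Points on or inside the margin play the role of support vectors.
sv_idx = np.where(np.abs(clf.decision_function(X)) <= 1 + 1e-15)[0]

fig, ax = plt.subplots()
ax.plot(xx, yy, "k-")
ax.plot(xx, yy_pos, "k--")
ax.plot(xx, yy_neg, "k--")
ax.scatter(X[:, 0], X[:, 1], c=y, cmap=plt.cm.Paired)
ax.scatter(X[sv_idx, 0], X[sv_idx, 1], s=100,
           facecolors="none", edgecolors="k")
```

This only works directly when the model is trained on two features. For high-dimensional text features such as TF-IDF vectors, the data would first have to be reduced to two dimensions and the classifier refit on the reduced data.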

The optimal separating hyperplane has been found with a margin of 2.00 and 3 support vectors. This hyperplane could be found from these 3 points only.

Figure: explanatory diagram of the SVM method.

The 2D plot uses the title `title = ('Decision surface of linear SVC ')`.
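The claim that the hyperplane depends only on its support vectors can be checked with a small experiment. The sketch below uses a hypothetical five-point dataset, chosen here so that a near-hard-margin linear SVC ends up with three support vectors and a margin width close to 2; it is not the data behind the figures quoted above. The margin width is computed as 2 / ||w||, and the large `C` value is an assumption used to approximate a hard margin.

```python
import numpy as np
from sklearn.svm import SVC

# Hypothetical toy data: three points define the margin, two lie far away.
X = np.array([[-1.0, 1.0], [-1.0, -1.0], [1.0, 0.0],
              [-3.0, 0.0], [3.0, 2.0]])
y = np.array([0, 0, 1, 0, 1])

# A large C approximates a hard-margin SVM.
clf = SVC(kernel="linear", C=1e6).fit(X, y)
w = clf.coef_[0]
margin = 2.0 / np.linalg.norm(w)  # distance between the two margin lines

print(f"margin = {margin:.2f}, "
      f"support vectors = {len(clf.support_vectors_)}")

# Refit using only the support vectors: the hyperplane should not change.
refit = SVC(kernel="linear", C=1e6).fit(X[clf.support_], y[clf.support_])
print("same hyperplane:",
      np.allclose(refit.coef_, clf.coef_, atol=1e-2)
      and np.allclose(refit.intercept_, clf.intercept_, atol=1e-2))
```

Removing either of the two far-away points leaves the fitted hyperplane unchanged, whereas moving any of the three support vectors shifts it.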

Case 1: 2D plot for 2 features, using the iris dataset and `from sklearn.svm import SVC`. Take `X = iris.data[:, :2]` (we only take the first two features), build the grid with `xx, yy = np.meshgrid(np.arange(x_min, x_max, h), np.arange(y_min, y_max, h))`, and define a helper `def plot_contours(ax, clf, xx, yy, **params)` that draws the classifier's predictions over that grid.
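Put together, the two-feature case might look like the sketch below. The names `plot_contours`, `xx`, `yy`, `X0`, `X1`, and the plotting parameters come from the fragments in this post; the helper `make_meshgrid`, the step `h=0.02`, the one-unit padding around the data, and the axis labels are assumptions added to make the example self-contained.

```python
import numpy as np
import matplotlib.pyplot as plt
from sklearn import datasets
from sklearn.svm import SVC

iris = datasets.load_iris()
X = iris.data[:, :2]  # we only take the first two features
y = iris.target


def make_meshgrid(x, y, h=0.02):
    """Grid covering the data with a margin of 1 (hypothetical helper)."""
    x_min, x_max = x.min() - 1, x.max() + 1
    y_min, y_max = y.min() - 1, y.max() + 1
    xx, yy = np.meshgrid(np.arange(x_min, x_max, h),
                         np.arange(y_min, y_max, h))
    return xx, yy


def plot_contours(ax, clf, xx, yy, **params):
    """Draw the classifier's predictions over the grid as filled contours."""
    Z = clf.predict(np.c_[xx.ravel(), yy.ravel()])
    Z = Z.reshape(xx.shape)
    return ax.contourf(xx, yy, Z, **params)


clf = SVC(kernel="linear").fit(X, y)

fig, ax = plt.subplots()
title = ('Decision surface of linear SVC ')
X0, X1 = X[:, 0], X[:, 1]
xx, yy = make_meshgrid(X0, X1)

plot_contours(ax, clf, xx, yy, cmap=plt.cm.coolwarm, alpha=0.8)
ax.scatter(X0, X1, c=y, cmap=plt.cm.coolwarm, s=20, edgecolors='k')
ax.set_xlabel("feature 0")
ax.set_ylabel("feature 1")
ax.set_title(title)
```

Call `plt.show()` to display the figure. A smaller `h` gives smoother region boundaries at the cost of more predictions over the grid.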
